I had been to an event in the east of London which finished late and required an overnight stay up in town. Instead of staying near the venue, I stayed back in Lambeth to be conveniently located for getting home the next morning. When I got back to the hotel, I went up to my room. I hadn’t really paid much attention to the view from the room when I had been there earlier but, now that it was dark, the illuminated view of the city caught my eye. The view across to the Palace of Westminster looked really nice and the blocks of flats in front looked far better than they had in daylight. Getting shots through multiple layers of glazing with such contrast is always a bit of a mess but the overall result was not too bad.

Category Archives: technique

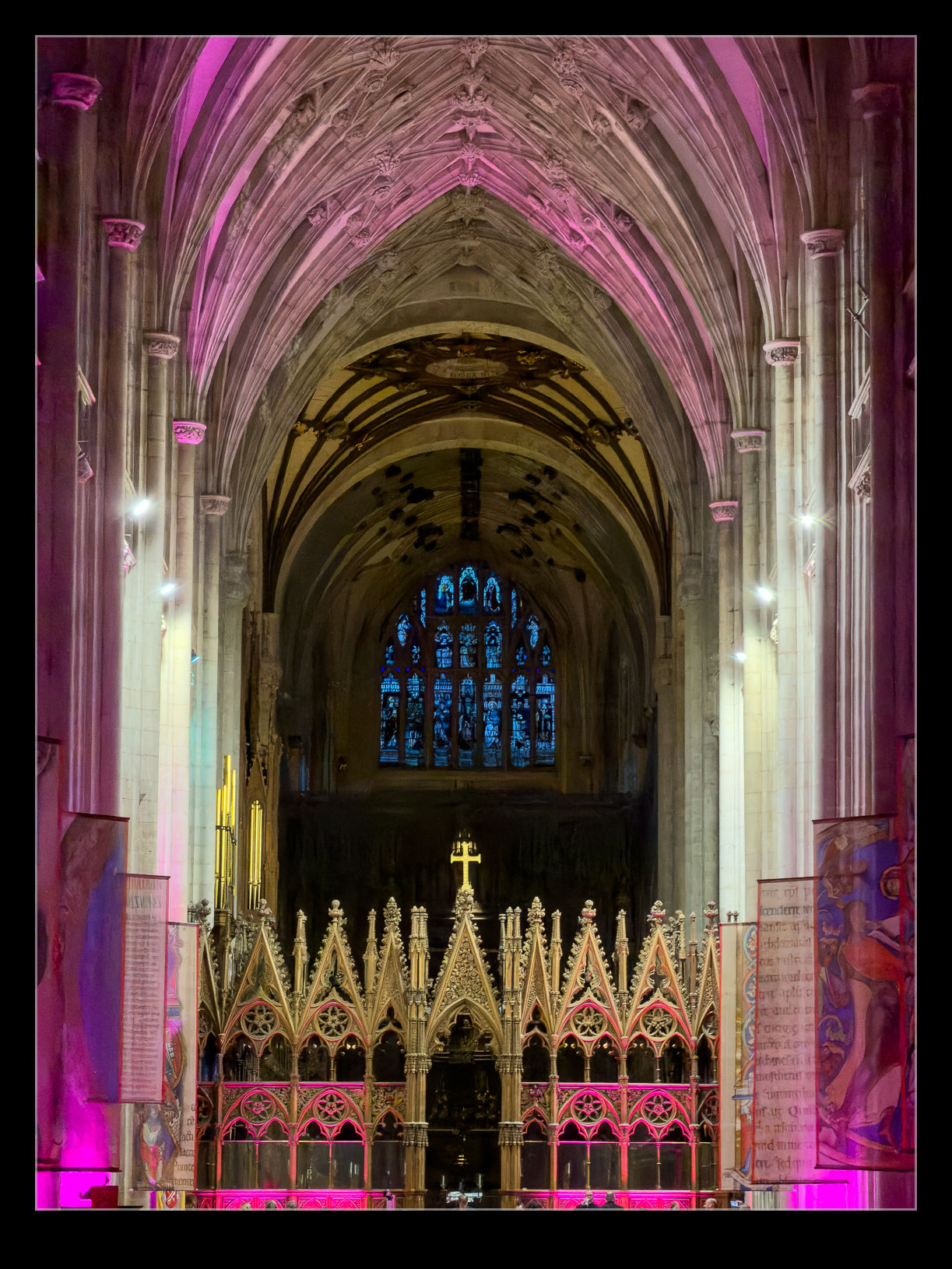

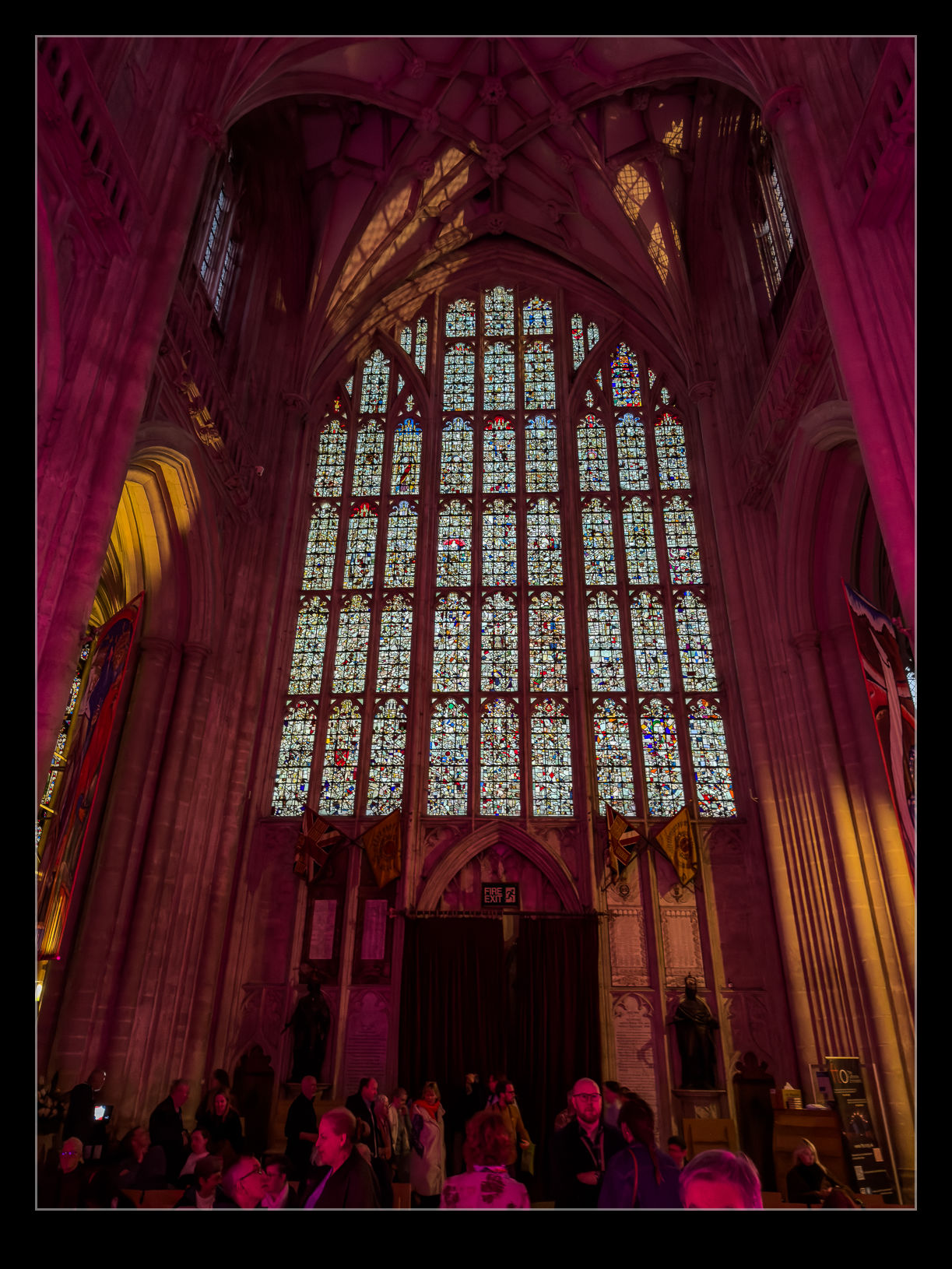

Night at the Cathedral

We bought tickets for an orchestral performance of The Planets suite at Winchester Cathedral. It was still daylight when we went in before the start of the event but, by the time it was over, it was dark outside. The illumination on the cathedral was really nice and the ability of a modern phone to compile an image in those conditions still amazes me. I stitched together some shots to make this image. I also took a few inside the cathedral between the parts of the performance. Low light really does make for more interesting shots.

Reprocessing Some Backlit Shots from LAX

Every once in a while, I put together two things that I hadn’t previously connected. I have been playing around with the masking tools in Lightroom for ages to put different processing on aircraft versus the sky in the background. When I had done some photography from helicopters over LAX, the lighting had been good on the northern complex but the planes arriving and departing the south complex had been quite harshly backlit.

The processing approach I was using at that time did not make for very good results and so I had tended to ignore the shots I had taken on that side and focus on the north complex instead. Then something I saw from another photographer got me thinking that the masking tools would be a good way to revisit these backlit shots and try to get a more balanced-looking image.

You can’t escape the fact that, if the original shot is not great, you aren’t ever going to turn it into something marvellous. However, there is the potential to come up with something significantly better than I had previously managed.

Selecting the airframe with a more cluttered background is a bit tougher for the automated tools so a fair bit of manual addition and subtraction was needed. However, because you are against a ground background rather than a sky, there is a certain amount of tolerance that you have for not getting the selection absolutely perfect. You don’t want glaring issues, but it won’t be as conspicuous as it is with a sky behind.

With the masking applied, it is a lot easier to come up with an exposure for the planes that looks a lot more like the eye would have perceived whilst still having a background that is okay. I can actually darken the background a bit more in order to make the plane pop. In one of the shots, there was a second plane on the taxiway, so I selected it separately to give it a reasonable look without it taking over the image as a whole. This was a very satisfying process with some images I had previously left alone.

Gear and Flaps

Photographing at Heathrow means you get a steady stream of planes with barely a minute passing without another one landing. You can end up with a ton of similar shots. That got me thinking about other things I would like. A close-up of the undercarriage and perhaps the flap system came to mind. For some reason – possibly the noise that the bursting vortices made after they landed – I decided that the 777-300ERs would be the ones I tried these shots with. However, an A380 did sneak in.

There is something about the mass of machinery that you get around the main landing gear and the inboard flaps that seems so complex. Of course, this is all under the wing so the lighting is less than ideal, but you get what you can. Just before sunset would be perfect but you don’t get to choose when the jets land. Here are a few of my favourite shots from that part of the afternoon shoot.

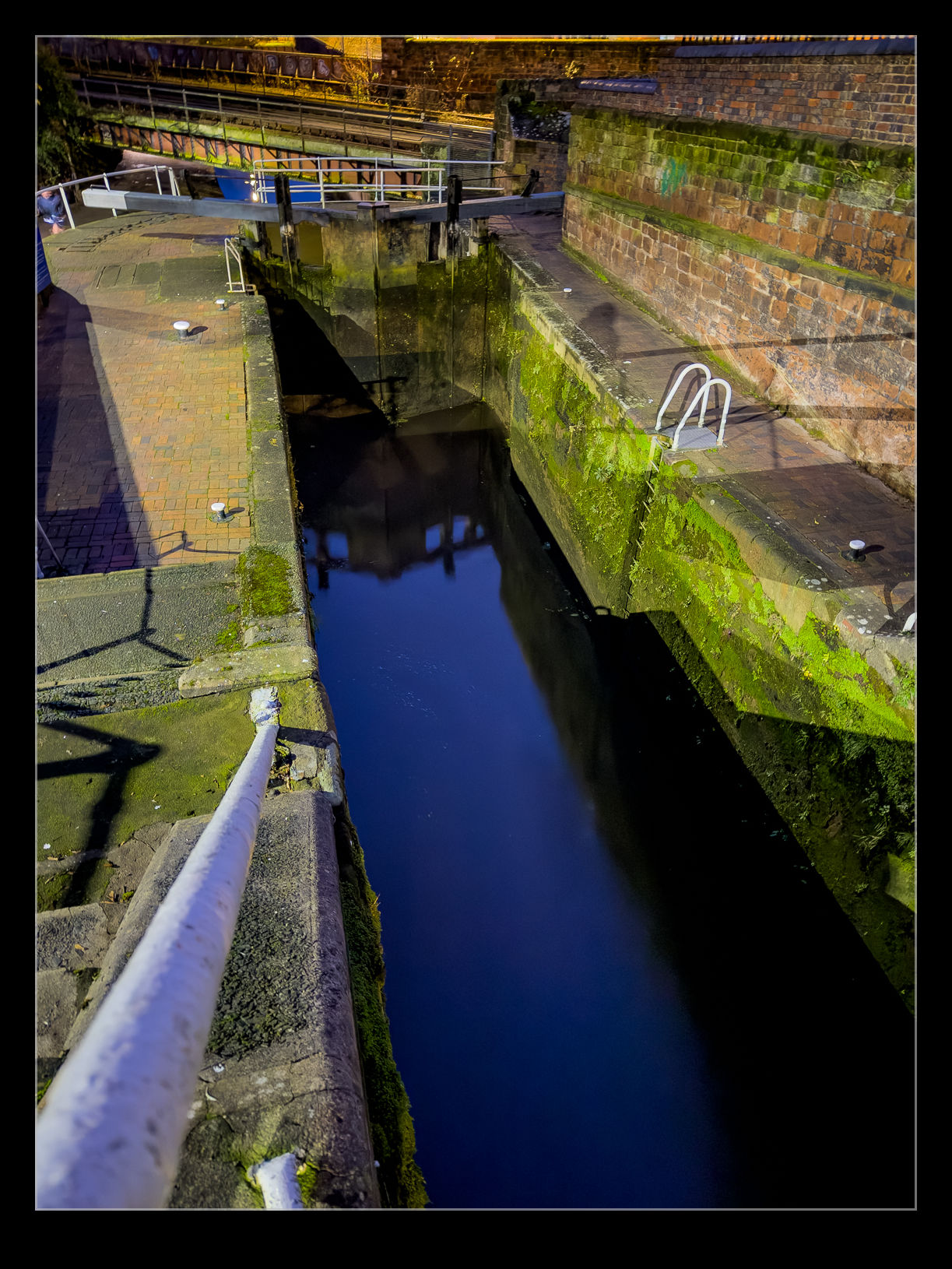

The Deep and Scary Locks

During my evening in Chester, I walked along the city walls until I came to a place where the walls met the railway and the canal. This is a place where the terrain drops off quite quickly and, in order for the canal to make the descent, there is a pair of locks with a very deep drop. It was very dark in this area at night and there was no lighting. Nor was there any fencing around the locks. Consequently, I was very cautious as I explored them.

This was where modern camera technology came to my aid. I could see very little of what was around me, even after my eyes had adjusted to the low light conditions. My phone, on the other hand, did a phenomenal job of picking up the faint light that there was and stabilising the image to build up a usable shot. I can see things in these shots that I had no sight of at the time. I would like to go back in daylight to see the locks in more detail. I did figure that, given how deep they were, you could come a cropper in there really easily if you weren’t careful.

Revisiting Boneyard Tour Shots With Reflection Removal

I have been a bit critical of the reflection removal tool in Lightroom but, while it seems to have become less effective on some shots, it can still do the job on others. This got me thinking back to my visit to Davis-Monthan AFB’s storage facilities in the days when the Pima Museum was still able to operate a bus tour of the rows of stored aircraft.

I tried my best to get clear shots through the windows of the bus and often did okay. However, when something of interest was on the opposite side, I was taking a lot more chances when trying to get a shot without any reflections in it. A friend of mine, Karl, regularly posts images taken on the same day and month many years before. He recently posted some DM shots, and this was what triggered the idea. I worked my way through some of the original shots that I wouldn’t have previously used because of the reflections. I managed to rework some of them to make something far more usable.

Flame Details in the Bonfire

Bonfire night celebrations in Winchester included a big fire and fireworks demonstration in Wall Park. The bonfire was started in dramatic (i.e. fake) fashion with two cannons firing towards it and it igniting. It was quite a spectacle although clearly a bit contrived. The whole thing is contrived of course. As the fire got established, I found myself entranced by the flames. Watching fire is like watching the waves. Every one is slightly different and I can spend a ridiculous amount of time just staring at it.

Getting images that actually reflect what I saw is something I haven’t practised so I was winging it a bit. The brightness of the most intense parts of the flames is so much more than the cooler areas that it is hard to reflect the detail that can be seen without blowing everything out. I went with underexposing to try and show more of what was interesting to me. Then I would look up at the hot particles above the fire as they rose and dispersed. They looked beautiful as they swirled around and cooled.

Reworking an Old Shot with Modern Denoise

Periodically, thinking about the latest processing tools that I have available takes me back to some older shots that would be interesting to rework. This shot of one of the Blue Angels jets was taken at NAS Oceana during one of their air shows. I was shooting with the 1D Mk IIN at ISO 800. At the time, this was a really high ISO and resulted in a lot of noise in the images. (As an aside, I did find that printing did not show the noise at anything like the level that was apparent on screen.) Even without the denoise function, the latest raw converter makes a decent job of the file but I figured I would use the denoise too. I think the file comes out really cleanly as a result. It also helps that, as an 8MP file, the processing is a lot quicker!

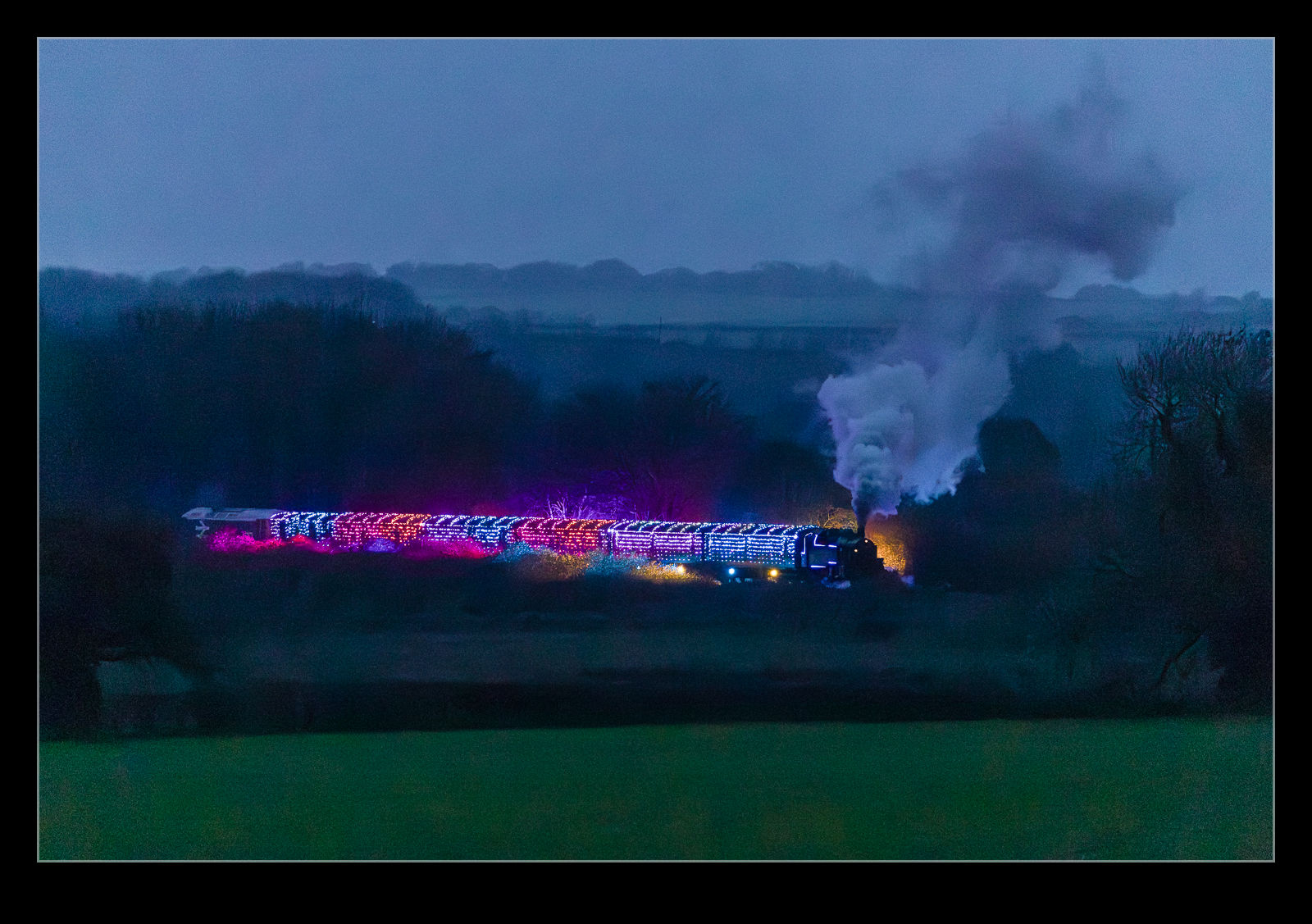

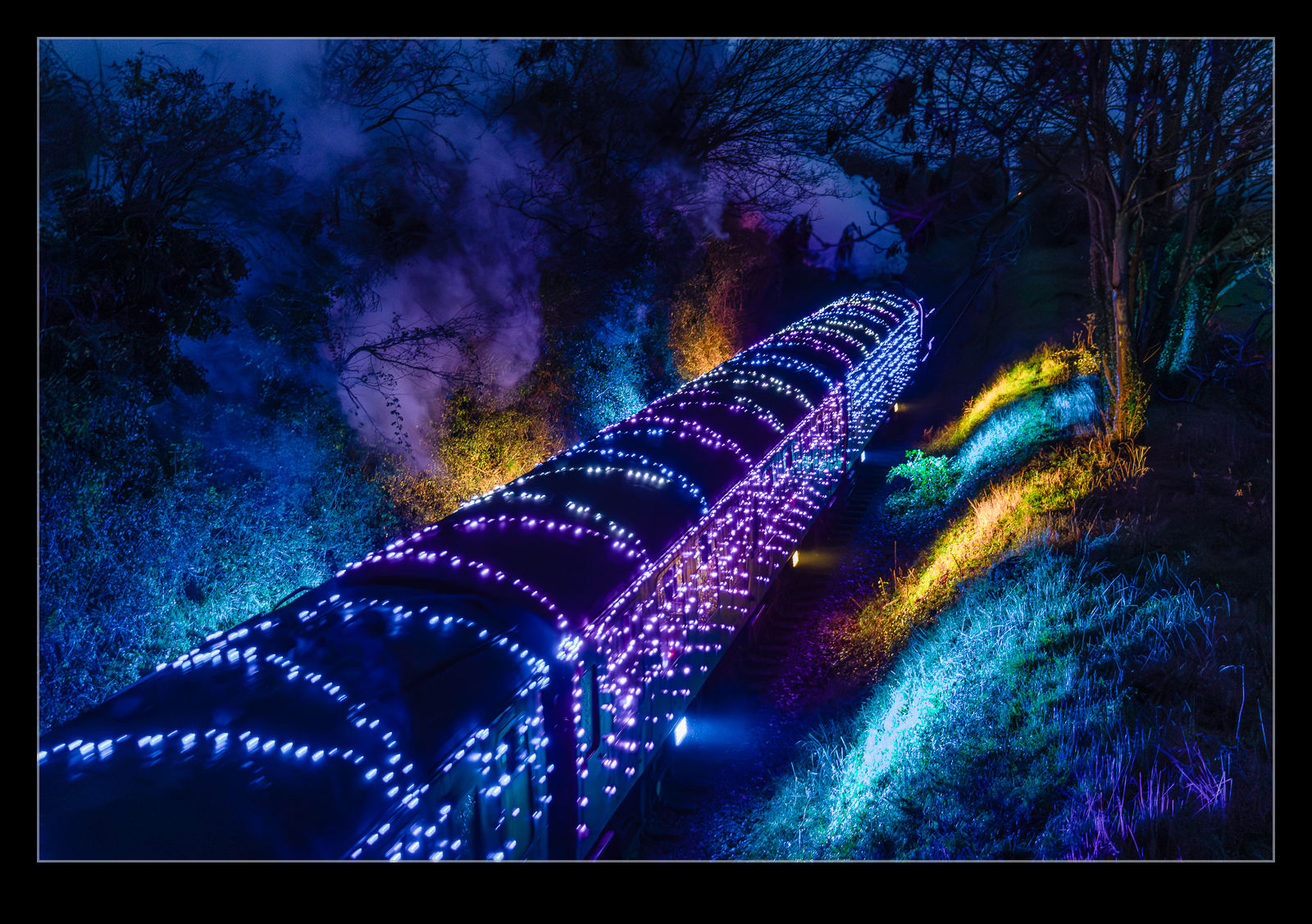

Lighting the Christmas Train

Get to the run up to Christmas and, if you have a heritage railway near you, there is a good chance they will be advertising that they have illuminated trains in operation. The trains will have lights all across the outside and probably within the carriages too. I’m sure they are fun to ride on but, from my point of view, seeing the outside lights is more appealing than being inside.

The Watercress Line is close to Winchester, and they had an illuminated service. In fact, they had more than one. My mum was visiting, and she was also interested in the lights so, late in the afternoon, since it was already getting dark at that time, we popped out to see the train go by. Sure enough, we soon saw it coming up the hill out of Alresford. There is a long stretch where the trees have been trimmed back and you get a good view of it coming your way. Even with the lights, the exposure is still a stretch for the camera. It did okay, though, and a bit of noise reduction software helps.

As the train came around the corner into the straight heading towards us, the lights illuminated the embankment on either side of the cutting. There was also a strong yellow glow which, I assume, came from the firebox. The colours were constantly changing and it looked really impressive as the loco pulled hard up the bank. I think that they had swapped to a smaller loco because they had a diesel on the back of the train to support it.

We were going to head straight home but one of the other people there told us there was a second train coming down from Alton a little while later. While it was getting a bit chilly and definitely dark, we figured there was no harm in hanging around. We did get the second train as it came down the cutting and then headed back the way the previous train had come. Going that way, they are going downhill so the loco is barely working to get them home. No plumes of smoke or thundering noise.

The Reflection Removal Tool Seems to Have Broken

When Adobe introduced the reflection removal tool, I was really impressed by its capabilities. I played with a number of shots that I had taken through windows, and they worked out really well. There have been some updates that Adobe has made, and it feels like it isn’t working as well as it should. I was at Glasgow Airport waiting for a flight home and an Emirates A380 was taxiing out so I grabbed a few shots through the glass. There was a really obvious reflection in the sky area of the shot. I figured that the tool would knock it out easily. Instead, it didn’t even recognise it was there. That is the first of these images. I tried a couple of different shots and none of them worked. I wonder what they have done to the tool that has made it struggle here. I ended up making manual selections and using the AI remove tool to try instead. It was okay but not a great result.